
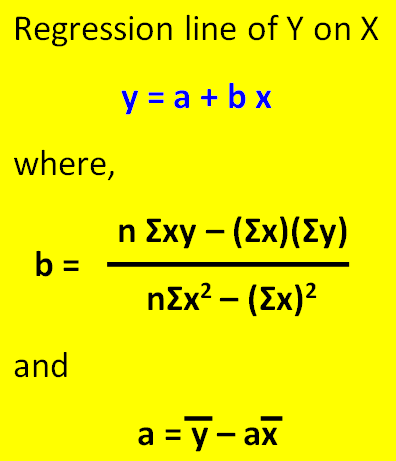

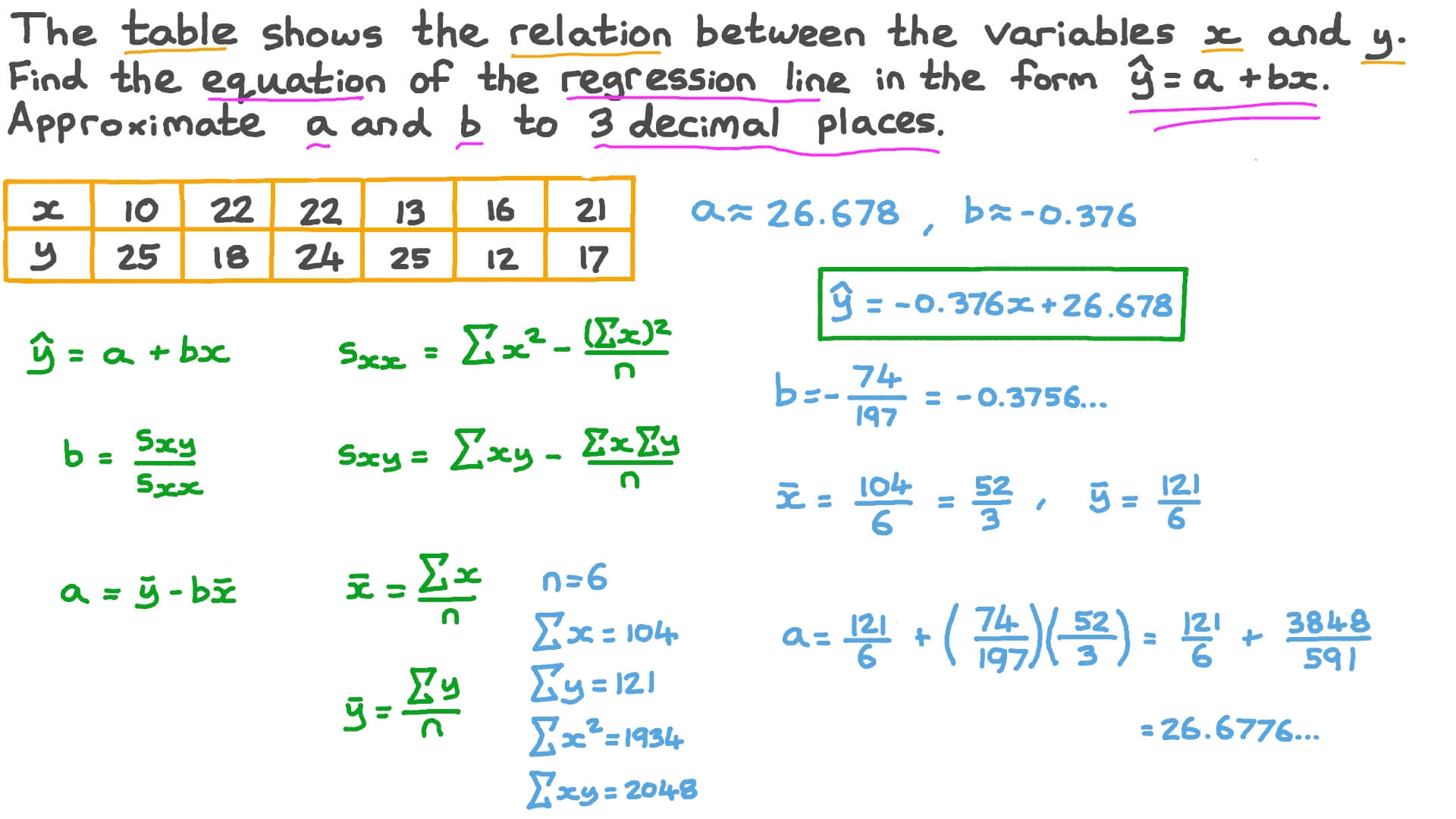

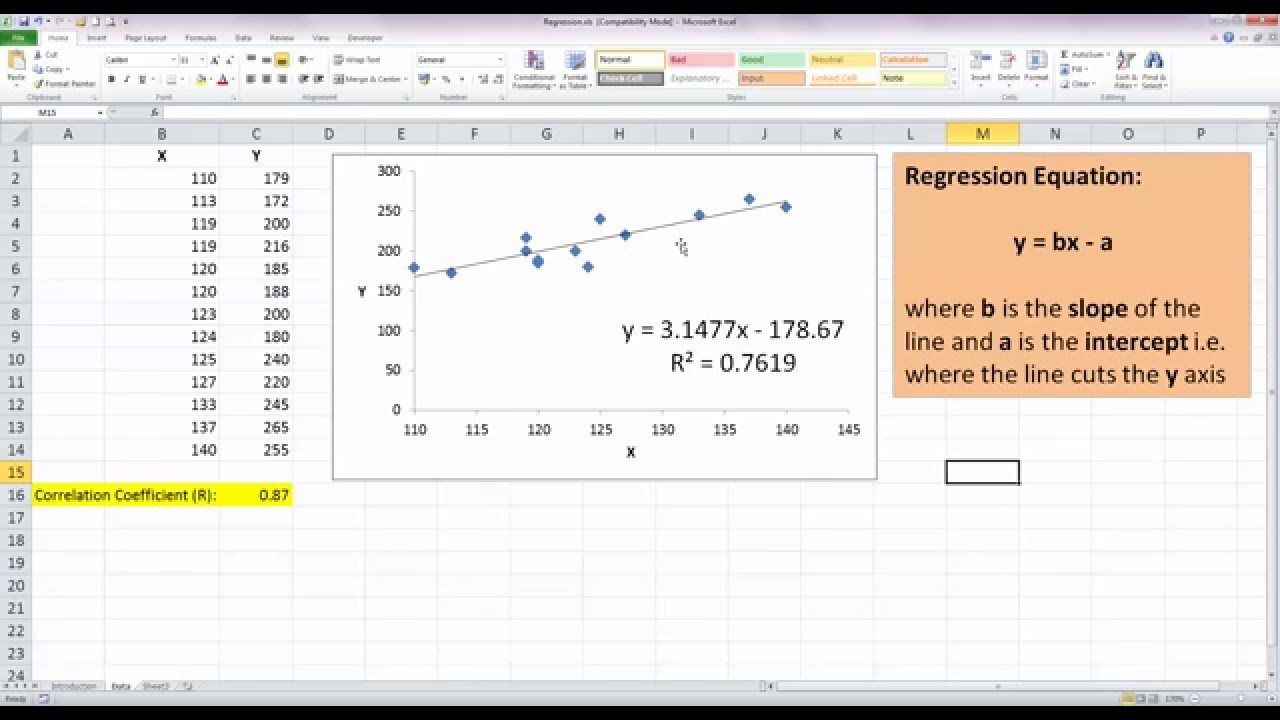

Linear regression is a type of supervised machine learning algorithm that computes the linear relationship between a dependent variable and one or more independent features. When there is only one independent feature, it is known as univariate linear regression; with more than one feature, it is known as multiple linear regression, sometimes loosely called multivariate linear regression.

The interpretability of linear regression is a notable strength. The model's equation provides clear coefficients that show the impact of each independent variable on the dependent variable, which makes the underlying relationships easier to understand. Its simplicity is a virtue: linear regression is transparent, easy to implement, and serves as a foundational concept for more complex algorithms.

Linear regression is not merely a predictive tool; it forms the basis for various advanced models. Techniques like regularization and support vector machines draw inspiration from linear regression, expanding its utility. Additionally, linear regression is a cornerstone in assumption testing, enabling researchers to validate key assumptions about the data.

Multiple Linear Regression – This involves more than one independent variable and one dependent variable. The equation for multiple linear regression is:

$$y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_p X_p$$

where $y$ is the dependent variable, $X_1, X_2, \dots, X_p$ are the independent variables, $\beta_0$ is the intercept, and $\beta_1, \dots, \beta_p$ are the coefficients.

Polynomial Regression – Polynomial regression goes beyond simple linear regression by incorporating higher-order polynomial terms of the independent variable(s) into the model. For a single independent variable $x$ and degree $n$, it is represented by the general equation:

$$y = \beta_0 + \beta_1 x + \beta_2 x^2 + \dots + \beta_n x^n$$

Ridge Regression – Ridge regression is a regularization technique used to prevent overfitting in linear regression models, especially when dealing with multiple independent variables. It introduces a penalty term to the least squares objective function, biasing the model towards solutions with smaller coefficients. In a standard form, with $\hat{y}_i$ the predicted value for observation $i$, $m$ the number of observations, and $\lambda \ge 0$ the penalty strength, the objective for ridge regression becomes:

$$J(\beta) = \sum_{i=1}^{m} (y_i - \hat{y}_i)^2 + \lambda \sum_{j=1}^{p} \beta_j^2$$

Lasso Regression – Lasso regression is another regularization technique. It uses an L1 penalty term to shrink the coefficients of less important independent variables towards zero, and it can set some of them exactly to zero, effectively performing feature selection. In the same notation, the objective for lasso regression becomes:

$$J(\beta) = \sum_{i=1}^{m} (y_i - \hat{y}_i)^2 + \lambda \sum_{j=1}^{p} |\beta_j|$$

Elastic Net Regression – Elastic net regression combines the penalties of ridge and lasso regression, offering a balance between their strengths.

The goal of the algorithm is to find the best-fit line equation that can predict the values based on the independent variables. Consider an example with exam scores: the third exam score, x, is the independent variable, and the final exam score, y, is the dependent variable. We will plot a regression line that best fits the data. If each of you were to fit a line by eye, you would draw different lines, so a fixed criterion such as least squares is used to choose a single line.
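To make the least squares fit and the ridge, lasso, and elastic net penalties concrete, here is a minimal sketch for the single-feature case, written in plain Python with no libraries. It is an illustration under stated assumptions, not a reference implementation: the function names `fit_line` and `soft_threshold`, the parameters `l1` and `l2`, and the data are all hypothetical, the intercept is left unpenalized, and the penalties are added to the plain sum of squared errors (libraries often scale these terms differently, so their penalty values are not directly comparable).

```python
def soft_threshold(value, threshold):
    """Move value toward zero by threshold, returning 0.0 if it would cross zero."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def fit_line(xs, ys, l1=0.0, l2=0.0):
    """Fit y = b0 + b1 * x for one feature by minimizing

        sum((y_i - b0 - b1 * x_i)^2) + l1 * |b1| + l2 * b1^2

    l1 = l2 = 0 gives ordinary least squares, l2 > 0 alone gives ridge,
    l1 > 0 alone gives lasso, and both together give elastic net.
    Assumption of this sketch: the intercept b0 is not penalized.
    """
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Centered sums of squares and cross-products.
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    # Closed-form minimizer for a single feature.
    slope = soft_threshold(sxy, l1 / 2) / (sxx + l2)
    intercept = y_mean - slope * x_mean
    return intercept, slope


# Hypothetical data lying exactly on the line y = 1 + 2x.
xs = [1, 2, 3, 4, 5]
ys = [3, 5, 7, 9, 11]

ols = fit_line(xs, ys)                        # no penalty
ridge = fit_line(xs, ys, l2=10.0)             # L2 penalty only
lasso = fit_line(xs, ys, l1=40.0)             # L1 penalty only
elastic = fit_line(xs, ys, l1=20.0, l2=10.0)  # both penalties

for name, (b0, b1) in [("ols", ols), ("ridge", ridge),
                       ("lasso", lasso), ("elastic net", elastic)]:
    print(f"{name}: intercept={b0}, slope={b1}")
```

On this toy data the unpenalized fit recovers the slope 2 exactly, the ridge penalty shrinks the slope to 1, and the chosen lasso penalty is large enough to set the slope to exactly zero, which is the behavior described as feature selection. With several features there is no closed form this simple for lasso or elastic net, and an iterative solver is normally used instead.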
